A few weeks ago I listened to Scott Guthrie discuss mobility and how it relates to Azure, and vice versa. I knew a bit about the mobile pieces, but the Azure side of the talk was packed with new and updated services and offerings. This platform has really come a long way in the last four years.

One of his first Azure talking-points was about IaaS and what it could mean to developers. Enter TitanFall.

He discussed some of the elastic infrastructure the development and program folks were using to prop this game up all over the world, which was pretty amazing. From this part of the talk we heard the quotes “Deploy at the Speed of Light – on your terms”, “Compete in a global market, but close to your customers”, and “constantly available resources”.

All of those, Azure or not, have a very nice ring to them, and they rang true from what we saw in the TitanFall highlights. From what I can tell from the TitanFall preview, the size of your application isn’t as much of a problem as it has been in the past. IMHO, understanding the architecture is what really matters: how all of the Legos fit together, and whether they talk to each other at all; who talks to whom, when, and why. That statement holds water in a lot of scenarios, yesterday and today, but I think it also fits the future goals we set for our solutions today.

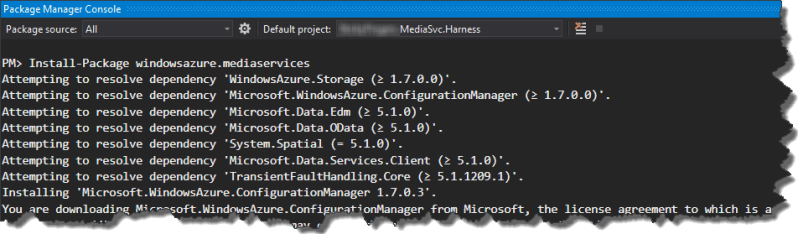

TitanFall has a lot of “headroom” to grow into, and the development tools are pretty sweet at this stage. And Scott promised they’d get better and better as time goes on. Awesome! If you’ve used the older versions of the Windows Azure portal, SDKs, and Visual Studio integrations, you know they’ve all matured into things that remove friction from our daily development goals. The notion they’ll mature more is even more awesome (yes I use the word awesome quite often).

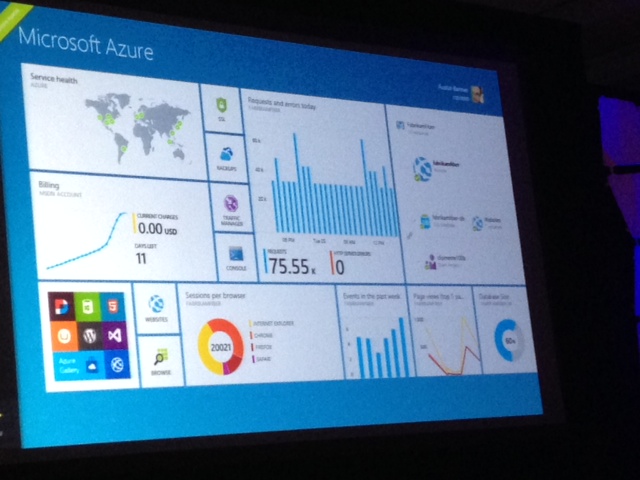

The statistics he shared from Azure were just as impressive. Here are a few screenshots:

1) The footprint of the data-centers around the world, and a few more coming online soon;

2) Interesting adoption stats ranging from authentication to Visual Studio Online registrations, to requests per second.

3) An updated portal dashboard that displays the development, production, and financial concerns of the portal owner – very slick indeed!
